Special Episode

This week’s edition of AI, Software, and Wetware is a written interview with Karo Zieminski, so there’s no podcast audio for it. Instead, we’re celebrating our 100th interview with a special episode: Lakshmi Veeramani interviews AISW host Karen Smiley about what she’s seen and learned in these first 100 interviews.

This interview is available as an audio recording (embedded here in the post, and later in our AI6P external podcasts). This post includes the full, human-edited transcript.

Interview - Karen Smiley and Lakshmi Veeramani

Karen: Hi everyone! This week marks the 100th interview in the “AI, Software, and Wetware” series. My interview with my 100th guest, Karolina Zieminski of Denmark, is written, so there’s no podcast episode for it; audio is available via read-aloud on Substack. So today, Lakshmi Veeramani is interviewing me about the series.

Lakshmi: We are celebrating someone whose voice has helped to shape important conversations around ethical AI, responsible technology, and the human values that must guide innovation. Through her AI, Software, and Wetware series, Karen has created a space where thoughtful dialogue, curiosity, and integrity come together to explore the future of AI in a meaningful way.

But what makes Karen truly remarkable is not just the conversations she hosts, it’s the community she builds. Her passion for empowering others led to bringing together 26 incredible women leaders to share their voices in a collaborative book, creating a platform where ideas, experiences, and leadership could shine together.

Karen leads with kindness, curiosity, and purpose. She reminds us that behind every conversation about technology, there must also be conversations about humanity, ethics, and inclusion. As she reaches the milestone of her hundredth interview, this is a moment to reflect on the journey, the impact, and the many lives she has touched through her work.

Karen, thank you for the inspiration you bring to the world, and I’m truly honored to have this conversation with you today. My first question: what inspired you to start this interview series in the first place, and what did it mean to you to create a space where voices from everywhere could be heard?

Karen: “AI, Software, and Wetware” was inspired when I was chatting with Amanda Rose Fadely about how the voices of women in underrepresented groups were, and still are, being drowned out in the conversations about AI. The uber-rich, white, US-based techbros were taking up all of the oxygen. At the same time, most of their messaging was blatant hype about AI, which I felt was misleading and unfair to people. From working in global companies for over 20 years, I knew that views and experiences outside the US could be quite different and deserve to be heard.

I also started hearing rumbles about “Why don’t women use AI more?”, which just didn’t fit with my work experiences or how easily I was finding women writing about AI on Substack. Amanda suggested that maybe I could do something about the gap: talk and write about how real people were actually using AI or not, to get beyond the hype and help highlight those quieter voices. And her suggestion got a quick ‘yes’ from me.

Now, I’m pretty introverted by nature. I’d never hosted a podcast before, and I’d only been a podcast guest once for an internal company audience. But I’ve done public speaking for years. I was a debater in high school. And I gave many conference presentations and taught courses during my corporate career. Starting a podcast and becoming an interviewer felt like a stretch that I could handle.

So I did some research and decided to host and publish semi-structured interviews. I drafted some questions, messaged a few women in AI that I had already connected with, got a good quality microphone, learned how to use Audacity, and boom: my “AI, Software, and Wetware” podcast was off and running in August 2024.

Some of the 100 interviews have been written, and nine have even been anonymous because the guests preferred it, but most of them have been audio, and published here on this podcast. I do seek out men as guests too, because many men’s voices have also been overlooked or stifled, especially in creative roles. About two-thirds of my guests have been women, though. I monitor my guest demographic data and sometimes go out of my way to find male and non-US guests to provide balance. I’m especially looking for guests from the global south. If anyone’s interested in being featured as a guest in the future, please see my guest FAQ!

Lakshmi: Okay. After 100 conversations about ethical AI, what moment or story stayed with you the most and reminded you why this work truly matters?

Karen: Oh, wow. It’s way too hard to pick out one story. I think my biggest takeaway is that I learned something from everyone. Not so much about technical aspects of AI, although sometimes I do pick up tech tips and tool suggestions. This series is mostly about people, though: how and why they do or don’t use AI, and how AI and data are affecting their lives.

I find myself referring back to my guests’ posts and sharing things I’ve learned from them. For example, I started out feeling very strongly about avoiding unethical AI tools, and I still do. But that has been tempered with understanding how judging people who use them could be ableism, which I definitely don’t want to practice. Or it could be a form of unfair discrimination, which I also don’t want to practice. So being able to use AI tools, or to avoid choosing unethically-developed tools, are both forms of privilege, and I’ve become more sensitive to that, thanks to my guests. I’ve posted a few times about why we should stop shaming people who use AI tools — or shaming folks who don’t use AI tools. I’m still all in on shaming companies who don’t act ethically, though.

Another thing I learned that has stayed with me is how the ethicality of AI is so much more than just whether the system was trained on data that was unethically sourced, i.e. stolen. As I conducted these AISW interviews and wrote the articles that led to my Everyday Ethical AI book, the reactions I got reinforced that most people just weren’t aware of these aspects, and this strengthened my resolve to help raise awareness and to amplify those voices. Learning about exploitative mineral mining and data labeling practices worldwide from Rebecca Mbaya and others was also a big eye opener for me.

Lakshmi: Yeah, that’s an awesome reply. When we talk about ethical AI, the conversation often focuses on policies and governance. How should ethical AI translate into real positive experiences for ordinary people in their day-to-day lives? As you correctly pointed out in your article “But I Don’t Use AI”, AI is already present in things like smartphones, social media feeds, navigation apps, and streaming recommendations.

Karen: Well, “policies” and “governance” sound complicated, and in corporate environments they can be. But in day-to-day life, we all have policies too. We just don’t usually write them down. At the heart, policies come down to decisions that we make day-to-day, what we value, and how we evaluate the trade-offs among our choices in alignment with our values. I ended up writing a lot about this in Everyday Ethical AI and recommending practical steps people can take: to protect themselves and their families and businesses, and to make decisions that align with their values.

In these first 100 interviews, the one value that almost every AISW guest calls out is transparency. People deserve to have choices about how and if their data is used. We deserve to have informed consent. People deserve to be credited and compensated for the use of their works. These rights are the 3Cs that CIPRI advocates. (That’s the Cultural Intellectual Property Rights Initiative.) And I support this 100%. Without transparency, though, there is no way to know if we have our 3Cs rights or if we’re just being exploited. And most AI companies or systems are not transparent. With for-profit companies, the business models generally don’t reward companies for being transparent. And they do what they’re rewarded for doing. It’s that simple.

So in a lot of situations, we have limited choices, or we aren’t aware of the consequences of those choices. This is why I focus on helping people realize what kinds of questions they can ask and learn what the risks are, to help them make better informed decisions in their day-to-day lives.

Lakshmi: After 100 conversations, what’s the most valuable lesson you have learned? Not just about your guests, but about yourself?

Karen: That it’s harder than it looks to be an objective interviewer and not inject my own bias into the conversations. And that I had some blind spots that I didn’t realize I had about ways in which the ethical discussions around using AI or not could be ableist or discriminatory. I’m grateful to everyone who was both candid and kind about helping me become aware of those concerns.

I also want to call out you and Professor Katrin Fischer and Professor Mary Marcel for your collaboration with me on analyzing the data from these interviews and helping to highlight some insights about the way that people think, whether there are differences by gender, by location, and things like that. It was very helpful for my understanding. And Katrin actually helped to point out to me that my questions could feel leading, as far as prompting the guest towards a direction that aligned with my own beliefs. And I’ve been working to address it in my more recent interviews.

Lakshmi: That is good to know. Across 100 episodes, have you noticed a recurring trait or mindset among the people who are building meaningful work? If yes, what is that trait or mindset?

Karen: Hmm. That’s hard to say. I think it would have to be a combination of a learning mindset and a sense of having a mission or purpose in life that lines up with their values. There is probably some self-selection bias among my set of guests, though, because offhand, I can’t think of one that didn’t have that learning mindset and a sense of mission, something that they’re passionate about.

Having said that, they might not bring a learning mindset to all aspects of their life, including maybe not trying to keep up with technology. Technology and AI might be secondary. But they do have that mindset for their life mission, and that is where their most meaningful work comes from.

That combination of learning mindset and a sense of mission might not be representative of the overall population, so I probably couldn’t base any strong conclusions about correlation on just my 100 interview guests.

Lakshmi: Okay. How has your definition of a good conversation changed from episode one to episode 100?

Karen: For this interview series, my overall definition of a good conversation is one that is both candid and safe, and I don’t think that’s changed. I have had a few podcast-proficient guests, but most of my guests are new to being interviewed. So making it a safe experience for them, maybe even fun, is important to me.

Part of a good conversation has been me just learning how to be a good interviewer. I hope I’ve gotten better over the last 100 interviews at asking follow-up questions, keeping track of points my guests raise in live conversations that I want to ask them to elaborate on and such. I’m still trying to learn not to talk quite as fast, but I think that will take a while. My guests are so interesting and there are always more points I’d love to explore with them than we have time to cover! I’ll keep working on that though.

Lakshmi: Absolutely. Women need sponsors more than mentors. I, myself, see you as my sponsor. You have consistently created platforms for others to share their perspectives. What have you learned about leadership through empowering other women?

Karen: I like the saying, “Be the person you needed when you were younger.” For over three decades, I studied and worked in mostly male-dominated environments and only occasionally had sponsorship. I never did get to have a woman as my manager or mentor, and I wished I had. So I try to be that kind of person for the women I care about, which includes you.

The hard part is trying not to default to giving you or anyone the kind of sponsorship I had wanted, but instead to give the kind of sponsorship you want or need. It’s more like the platinum rule than the golden rule, you could say.

I had worked as a software developer for some years before I became a people manager in the late 1990s. Then I moved into research for quite a while in the early 2000s. One of the reasons I moved back into corporate leadership roles in the late 2010s was from reflecting on how I could have the most impact. As a leader and people manager, I could model how to hire inclusively. I could prove that diverse teams perform better than non-diverse teams. I could show how women can be effective leaders and mentors and sponsors without acting like men. And every hour that I put into supporting women on my teams could have much more of an effect on them, and then on the world, than me spending that hour on my own hands-on contributions and my own career progression.

And the same now holds for working on these AI, Software, and Wetware interviews, or on the SheWritesAI books and digests. I can spend 10 or 40 hours writing my own articles, or I can spend those 10 or 40 hours interviewing and promoting the writing and work of other women and underrepresented folks. If I could help them get established and find their audiences and voices, I think that could have a multiplier effect and a bigger net positive impact on the world. So that is where I’m putting most of my energy and hours nowadays.

Lakshmi: Thank you for making the positive impact on the world. My next question is: As AI becomes more powerful and integrated into society, what human values do you believe we must protect the most?

Karen: That’s a hard question in a way, because the easy answer is “what I value most”. But one insight I’ve learned from my guests, especially Rebecca Mbaya, is that other societies and people have different values that aren’t currently reflected in most of the available AI tools, and those values are important too.

So I guess my answer is that we must protect and support the values of self-determination and respect, and not just within the current AI tool value systems and frameworks. Everyone deserves to have AI tool choices that reflect and respect their value systems.

Lakshmi: Yeah. Thanks to Rebecca for sharing some questions. The next question is: During your journey with the interview series and the book, has there been a moment when someone shared that your work impacted them, in a way that deeply moved you?

Karen: Definitely more than one. People were so kind. I think the biggest impacts I hear about are from the standard questions that I ask. Sometimes people just don’t know about the ethical concerns or the privacy risks because the voices in society that talk about them are drowned out by the hypesters. And once people see and hear about those risks and ethical issues, they generally can’t unsee them or unhear them. They take away this awareness that they didn’t have before. I often hear from people about this later.

That’s what I hoped my Everyday Ethical AI book would do too. I wrote it as a way to reach more people than I can reach with the one-on-one interviews or even with my podcast, and I hope that the She Writes AI Everywhere series will reach even more people in more places.

Lakshmi: I am happy to know that. The next question is: Your interviews encourage thoughtful and sometimes challenging conversations. What motivates you to keep asking the questions that matter?

Karen: Because they matter. I mean, it helps that I’m now privileged enough to be able to ask those challenging questions without risking my livelihood. I try to do it in a non-judgmental way that encourages people to feel safe, to reflect and respond, so that we both take away some new insights from our conversations. There’s already too much judging and not enough listening and learning. We need less heat and more light.

I personally can’t do everything that I’d like to do to make the world a better place. But I can do this, so I choose to do it. I like the saying, “Somebody should do something. And I am somebody. So I’m going to do something.”

Lakshmi: Yeah, that is inspiring, Karen. What advice would you give to young women who want to enter the field of AI, but may not see themselves as belonging in this space?

Karen: Short answer: You belong. You don’t need anyone’s permission. Find inspiration in the women who have not waited for permission, or who have stopped waiting. Seek out the other women, and in some cases girls, who are in the AI space. The She Writes AI community is a great place to look. And Celeste Garcia is doing these amazing profiles on important women in the history of AI technologies-- women that most people don’t know enough about. They are in the Herstory section of the She Writes AI newsletter.

Lakshmi: Yes, we all truly belong. Thank you for that. Looking ahead, what kind of impact or legacy would you hope your conversations about ethical AI leave for future generations?

Karen: I would love to think that future generations will look at ethical values-based decision making as obvious, about AI and everything else. Like, “Well, of course we should do that.” Where kids grow up learning about making respectful, thoughtful, ethical choices, so that we don’t even have to think about it consciously; it’s like breathing. I don’t think I will live long enough to see that day, and my own legacy and contributions are probably just a drop in a very big bucket. But every drop counts.

And I hope that every person I can support, everyone who gets a boost to their confidence in their career, to build their voices and skills and influence how AI is developed and used, can pay it forward and support others to build their voices and skills — and create more drops. That I do hope to live to see.

Lakshmi: Yeah. I love your caring for the future generations. Thank you. As you reach this incredible milestone of 100 interviews, if someone reading or listening today carries forward just one idea or value from your work on ethical AI and empowerment, what would you hope it is? And thanks Rebecca for the question.

Karen: Hmm, good question. I think it would be what I discussed with my friend Jax in their PLANET podcast: that we all have agency.

We have some power, as individual consumers and collectively, and we shouldn’t give up so easily on influencing the way AI is developed and used. We all make decisions every day. We have some choices, and those choices matter. Just look at the QuitGPT movement, as an example. People are voting with their money and time to move away from ChatGPT to other platforms. And it’s showing up; it’s making a difference.

The more we learn about our options, about how they align with our values and about the consequences of our choices, the better the decisions we can make for ourselves and for our families, and our businesses. So keep learning, keep listening. Keep an open mind, and be prepared for your views to evolve as you learn.

Lakshmi: Thank you, Karen. It is wonderful talking to you, as always. I hope to see many hundreds more podcasts coming from you and making an impact in the AI world. Thank you.

Karen: Thank you so much, Lakshmi. This has been a lot of fun. I appreciate you making the time for the interview with me. Thank you!

Interview References and Links

Karen’s books:

Everyday Ethical AI: A Guide For Families & Small Businesses, Sept. 2025

AI Everywhere, Volume 1: How Women Are Changing The World With Artificial Intelligence, March 2026 [26 authors from 14 countries; first book in the She Writes AI Everywhere series]


About this interview series and newsletter

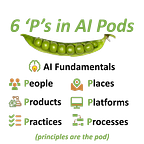

This post is part of our AI6P interview series on “AI, Software, and Wetware”. It showcases how real people around the world are using their wetware (brains and human intelligence) with AI-based software tools, or are being affected by AI.

And we’re all being affected by AI nowadays in our daily lives, perhaps more than we realize. For some examples, see the post “But I Don’t Use AI”.

We want to hear from a diverse pool of people worldwide in a variety of roles. (No technical experience with AI is required.) If you’re interested in being a featured interview guest, anonymous or with credit, please check out our guest FAQ and get in touch!

6 'P's in AI Pods (AI6P) is a 100% human-authored, 100% reader-supported publication. (No ads, no affiliate links, no paywalls on new posts). All new posts are FREE to read and listen to. To automatically receive new AI6P posts and support our work, consider becoming a subscriber:

Series Credits and References

Disclaimer: This content is for informational purposes only and does not and should not be considered professional advice. Information is believed to be current at the time of publication but may become outdated. Please verify details before relying on it. All works, downloads, and services provided through 6 'P's in AI Pods (AI6P) publication are subject to the Publisher Terms available here. By using this content you agree to the Publisher Terms.

Audio Sound Effect from Pixabay

Microphone photo by Michal Czyz on Unsplash (contact Michal Czyz on LinkedIn)

Credit to CIPRI (Cultural Intellectual Property Rights Initiative®) for their “3Cs' Rule: Consent. Credit. Compensation©.”

Credit to Beth Spencer for the “Created With Human Intelligence” badge we use to reflect our commitment that content in these interviews will be human-created:

If you enjoyed this interview, we would love to have your support via a heart, share, restack, or Note! (One-time tips or voluntary donations via paid subscription are always welcome and appreciated, too 😊)